eXtensions - Sunday 19 March 2023

By Graham K. Rogers

With Silicon Valley companies heavily into staff cuts, Apple has a different approach. Moving operations to India seemed like a good bet for Apple, but now authorities there want to mess with installed apps. Researchers have found another potential way the Apple Watch could help patients. Sony was listed as one of the most ethical companies recently. Does anyone remember the Sony Rootkit? Commentators are still debating the use of AI on Samsung smartphones.

Following recent cutbacks in Silicon Valley, companies like Twitter, Google and Facebook/Meta are still shedding staff. Despite a 10,000 reduction at Meta, many commentators were more concerned that Apple is losing some members of higher management and that there appear to be delays in bonus payments. Meta is to dismiss another 10,000 workers, but Apple is in real trouble, eh?

The last couple of years have seen a total market readjustment in terms of sales as people stayed at home. Now personnel are being shed as projects are not working out as was hoped, particularly at Meta and Twitter, whose CEOs are not always praised for thinking clearly.

Just after Apple made major commitments to India, it seems as if the shine is going off this new experience pretty quickly. Along with politicians in many countries, those in India like to tamper with the status quo: in this case, pre-installed apps on the iPhone. To be fair, this does affect other smartphones as well, so it is not directly aimed at Apple, but as Jonny Evans (AppleMust) reports, the government there wants to "protect against spying and abuse of user data on the part of smartphone manufacturers." This could also be expected to affect the iPad.

The government is already in talks and is targeting the pre-installed apps, notably Safari and Photos on the iPhone. It gets worse, as handset makers will have to submit new devices for testing to the Bureau of Indian Standards: a process which can take up to 21 weeks. Expect your new iPhone in April. Evans is disturbed by the potential effect on the digital supply chain, as "you end up with a situation in which an OS in one nation may work very differently than it does in the nation next door" and there is also the threat of state-mandated incompatibility. The whole system could come tumbling down.

Sometimes I curse my Apple Watch when, after some unusually rapid movement, like turning in a doorway, it identifies a potential fall and starts the Fall Detection warning process before dialing out. Thanks to the alarm sound, I have so far been able to stop the SOS calls. Of course, if I really did fall down, I might not know, and I am grateful for the potential of help being on the way.

The Watch has several warning systems built into the health monitoring, such as low or high heart rates (both could be problematic), and if there is a warning, the user should monitor things but must also consider qualified medical advice. I monitor my weight daily and after a series of problems I saw that over 3 days I had lost 5 kg. That set off alarm bells and I contacted my doctor, who soon diagnosed the problem. Not the Watch that time, but just one of the ways I use Apple Health on the iPhone and Apple Watch to monitor my own health.

It has been reported that there is another way that the Apple Watch might be able to protect users, in terms of health warnings. MacDailyNews links to a report that outlines a check that was done on patients who wore the Apple Watch during a medical visit. Data collected was manipulated [my reading] using AI (machine learning) and it was found to be possible to predict pain levels in patients with sickle cell anemia.

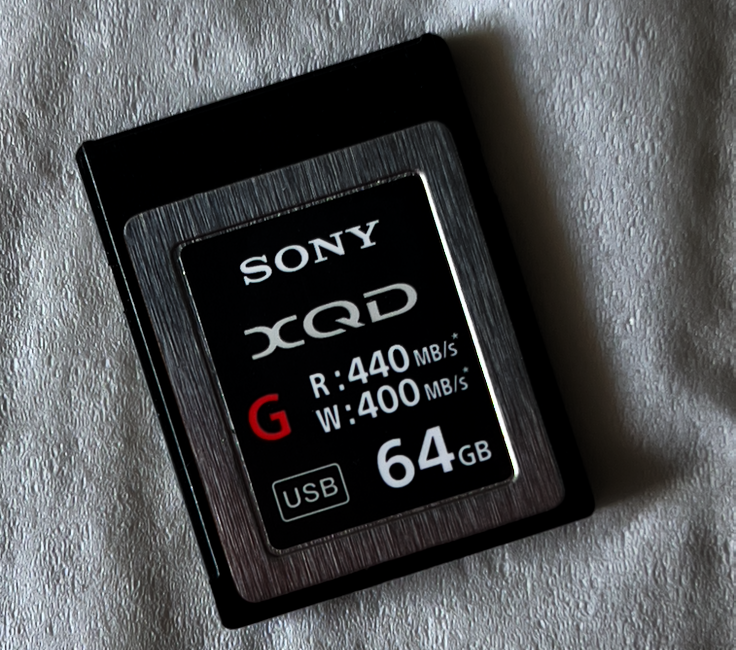

In my early morning newsfeeds I noted a story from PetaPixel (Jaron Schneider) that had Sony listed as one of the world's most ethical companies. This was in a list produced by Ethisphere that has been going since 2016 and which includes "a 200-point assessment, documentation review, and independent research into an organization's reputation and ethical practices." I guess they missed the Sony Rootkit from 2005 when software was included on over 20 million CDs that modified the OS on PCs without telling the user. With the restrictions on OS X (now macOS), attempts to install this rootkit on Macs needed user permission. Some exploits using the rootkit vulnerability were later found on PCs.

Since that time, I have only bought one Sony branded item: a memory card when I bought the Nikon D850. As Nikon was using the XQD cards that were a Sony standard, I had no choice then, apart from running with lower speed SD cards. The rootkit cost Sony a lot in terms of money, legal problems and user trust. The Wikipedia article has a reasonable summary. Ethical Sony eh?

Schneider notes that although Apple, Dell, HP, and Western Digital are included, "[n]either Nikon nor Fujifilm" have ever appeared on the list, while Canon, which had appeared for the last 5 years, was no longer listed.

Last time I mentioned comments about the way Samsung appears to have used AI to produce exceptional images of the moon from relatively poor input. Users managed to produce similar results from degraded images and concluded that, instead of the camera, the output was in-device AI using images that it had been trained on. My iPhone screenshot at the right shows a slightly less crisp image of the moon.

AI is not really intelligent at all. You just have to keep feeding it with a diet of images (or text depending on the purpose) and it uses that training information - usually thousands of images - to produce a result. This is not at all like a human: we have analytical and deductive capabilities far above what AI has. And humans can dream. The killer AI computer, HAL, in Stanley Kubrick's 2001: A Space Odyssey, asked about dreaming (in 2010: The Year We Make Contact when Dr. Chandra shut it down).

I have advised some groups of students on projects that were based on AI detection (cancer and Covid) as ways to assist doctors, particularly in rural areas where sophisticated medical imaging technology would not be available. They would only have X-Rays to work with. By training the software with thousands of images that did (and did not) show problems, the doctor could be made aware of a possible indication and order further tests.

Although Samsung had provided some explanation of the processes involved on its Galaxy, it was stung into producing an English version of the explanation after several sources began questioning the ethics. Jaron Schneider (PetaPixel) had already commented on the output from a new Canon and was not sure where the line should be drawn. He also comments on the Samsung PR release.

Samsung denies that it is providing any image overlaying onto the original image with its AI-based Scene Optimizer and notes that the feature can be disabled, adding "After Multi-frame Processing has taken place, Galaxy camera further harnesses Scene Optimizer's deep-learning-based AI detail enhancement engine to effectively eliminate remaining noise and enhance the image details even further. . . ", which does not really answer the question.

Schneider writes that this ability to add detail that is not visible is the crux of the matter. Is this too much processing? He thinks that what appears to be excessive processing by Samsung may lead to some negativity from users when these smartphone-like features are added to cameras, such as the Canon's Creative Mode he had earlier discussed.

Also looking at the moon image that Samsung has used as an example of superior processing, Wasim Ahmed at Fstoppers is similarly questioning the ethics: what makes a photograph? In his article he is quite clear that "no cell phone should be able to make moon photos this clear with the hardware they are packing." That for me is the line that has been crossed. Like Schneider and Ahmed, however, I am prepared for at least some post-editing AI use.

I mentioned the clever Repair tools in Apple Photos and Pixelmator Photo in recent comments as an example. There is also HDR (which I do not use), but I do occasionally use focus stacking with Helicon Focus that combines multiple images taken with the Focus Shift Shooting option.

However, with the Samsung smartphone, as several commentators point out, it is not the hardware, but the in-device processing, using AI to turn a sow's ear into a silk purse. The lines are still blurred.

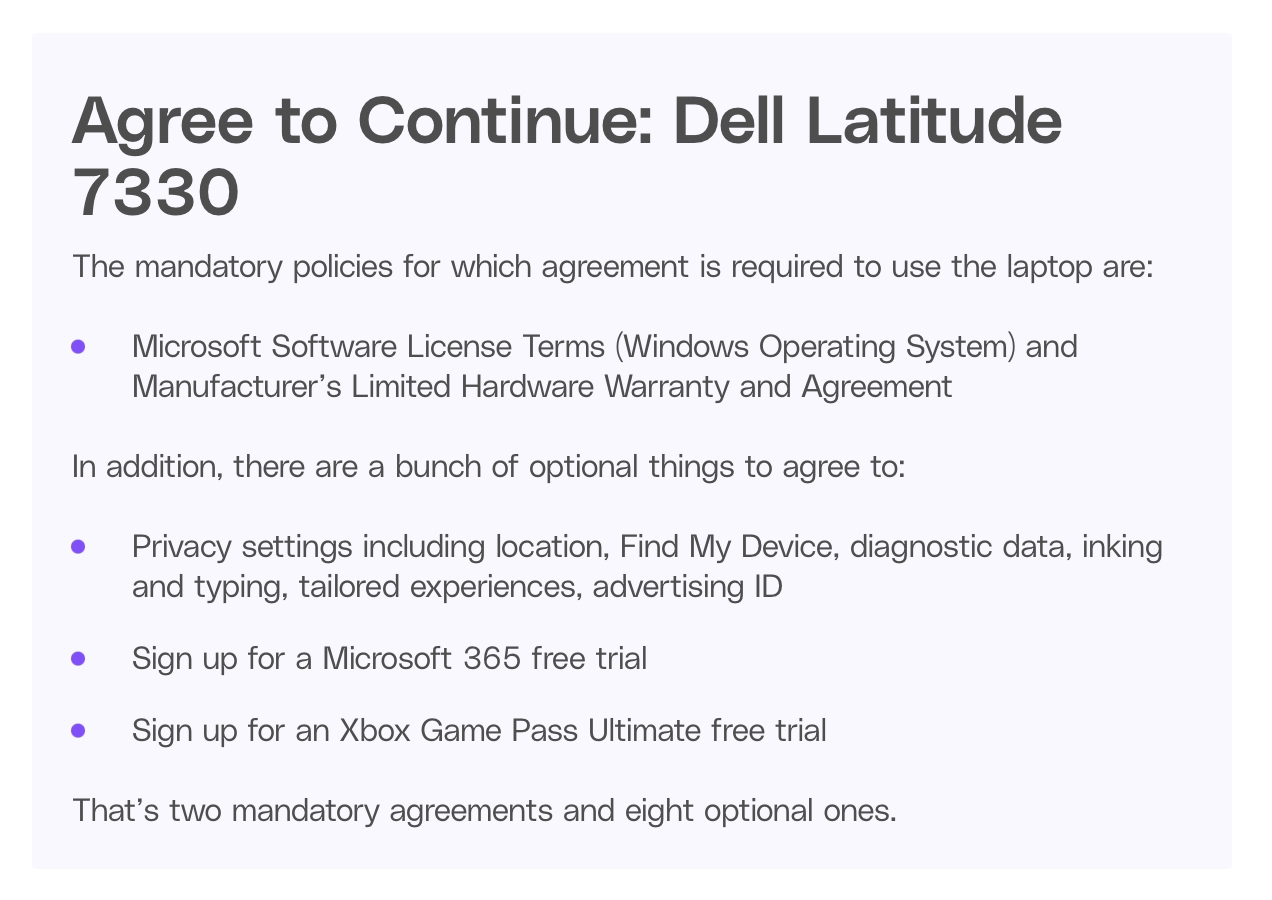

This week I saw a review of a new Dell Latitude 7330 computer by Monica Chin on The Verge. It was thin and light. As someone who has been quite satisfied with the MacBook Pro and MacBook Air computers I have used in the last 5 or so years (Intel and M1), I read this with some surprise. It was subtitled, "This isn't a bad laptop, but it is a bad deal."

With what I read I could not really call it a good laptop and, as she rightly suggested, at the price this was not a good deal. With a $2000 computer users were expected to put up with battery life of about three and a half hours. But it was thin and light. It does not end there: when the computer was being pushed to the edges of its performance, there was (she writes) a "visible slowdown". With all this, she accepted that she could "recognize the value of a device like this". I am afraid it was beyond me.

I must admit I was hard pressed to see anything I would want, especially with the number of agreements a user had to click on before being allowed to use the device, such as Microsoft stuff, privacy settings, more Microsoft stuff, and an Xbox trial. Two mandatory agreements, she writes, and eight optional ones. The reader comments were not exactly glowing recommendations. But it was thin and light.

Graham K. Rogers teaches at the Faculty of Engineering, Mahidol University in Thailand. He wrote on IT subjects in the Database supplement of the Bangkok Post. For the last seven years of Database he wrote a column on Apple and Macs. After 3 years writing a column in the Life supplement, he is now no longer associated with the Bangkok Post. He can be followed on Twitter (@extensions_th).
