Weekend Notes: iPadOS may be Delayed; Restrictions on PayPal Accounts in Thailand; Samsung Repair Mode for Phones; Keyword Warrants and Minority Report; Cowardly Apple and CSAM Detection

By Graham K. Rogers

It is reported that there are still some problems with making the feature work properly (Apple's demo showed its potential), and Apple is likely to hold a feature back if it is not satisfied that it works properly. Gurman also dangles the possibility of new iPads, which would be another sensible reason for such a delay.

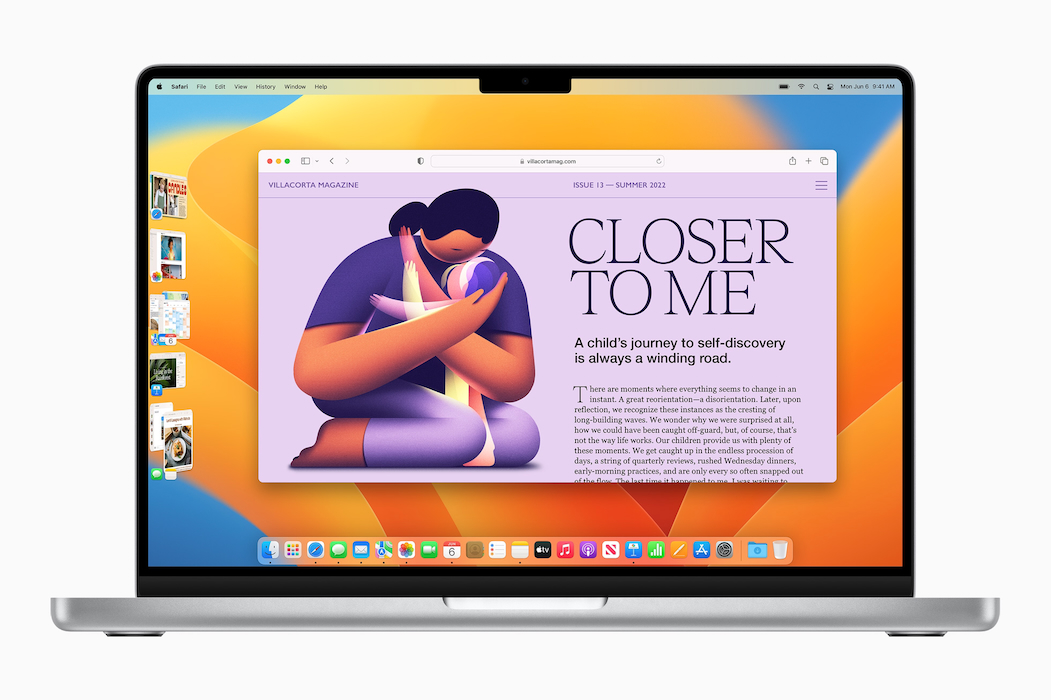

Stage Manager running in Ventura on a Mac - Image courtesy of Apple

Many developers have produced versions of their software optimized for Apple silicon, and now Microsoft has updated Teams to do the same. The update is available now and apparently also brings performance improvements (Jonny Evans, AppleMust). I do not have Teams on the Mac, but it is on my iPad Pro, where it was last updated on 30 July. The update information there makes no mention of any such changes, so this appears to be for the Mac only.

Inconvenience for Users

PayPal is making changes to accounts in Thailand. I understand that governments worldwide are tightening up on the ways in which money changes hands online, although they do not seem to make much effort with those who use offshore accounts. As part of the changes here, users "will need to be enrolled in the National Digital ID platform to complete the identity verification process, in advance of the account transfer to PayPal Thailand later this year." All well and good, but enrollment is restricted to Thai nationals, so anyone like me who lives and/or works here (and there are several of us) is caught between a rock and a hard place.

As online purchasing has become so significant these days, PayPal has been a convenient way to transfer funds. I regularly buy books, films and several other items online, and being able to do this quickly and seamlessly is a great help. In some cases I use a credit or debit card (I know the difference), and if PayPal offers no alternative, I expect to do that more often. I have just added an alternative payment method to my eBay account. In some cases it is easy, as my details are recognised when I make frequent purchases (food and coffee online from local sources), while with Apple I use a credit card.

Our personal information should be just that: personal and private, which is why there is such concern when the authorities try to fudge the rules (see below). Long before home computers and smartphones, I was a policeman, and it was known that personal diaries and letters might be brought into evidence, either for the details they included or to demolish an alibi. Only write down what you are comfortable with the world seeing. The same goes for images on the devices we use. Nonetheless, like videos that invade privacy, the photographs in the Pegatron case should never have been viewed, let alone shared, by the technicians, and Apple's comment, "when we learned of this egregious violation of our policies at one of our vendors in 2016, we took immediate action and have since continued to strengthen our vendor protocols," only goes part of the way to correcting this.

Samsung has a new solution that I hope Apple will copy. Jaron Schneider (PetaPixel) reports on a (currently) South Korea-only initiative: a "repair mode" for its Galaxy S21 phones that keeps photos and data hidden from repair technicians to protect privacy. Once a phone is in Repair mode, no one can access messages, photos or accounts, and only the default installed apps can be used. Samsung plans to expand this feature to other smartphones, and it would make sense for Apple to follow suit.

Photo Library - on iPad Pro

Fishing for Keywords

Although the information on our devices is private, there are some cases in which law enforcement is able to access it legally: using warrants. There have been cases when companies like Apple and Microsoft have rejected these, and in some cases judges have refused to endorse warrant requests. A look at the Terms & Conditions will show that users sign up to accepting this action by the companies that hold the data. While iCloud secures information by encrypting it in transit, storing it in an encrypted format, and securing the encryption keys in Apple data centers, iCloud backups are not end-to-end encrypted: Apple holds the keys, so backups are available in response to a legally served warrant.

Obtaining a warrant was not particularly easy when I was a policeman: a reasonable amount of evidence had to be presented to a judge (or magistrate) before one could be issued. There are several types in the UK (firearms, stolen property, security et al). The USA has its own parameters and guidelines, with digital searches a relatively new type that can reveal messages, images, web history, spreadsheets and other documents, as well as metadata. Law enforcement sometimes tries to push the boundaries of the law, and that is an excellent reason for the existence of courts and learned judges. They put the brakes on; or should do. Albert Fox Cahn and Julian Melendi (Slate) report on a new type of search that seems more akin to a fishing expedition: the keyword warrant.

Fishing in murky waters

The Slate article points out that the Google search histories of individuals have been used for several years, and that is understandable: they can show intent, mens rea (intention or knowledge of wrongdoing), which could help in preventing criminal activity or in supporting a conviction. The wider, sweeping use of keyword warrants skips a step, "transforming a targeted (albeit invasive) search of one person into a search of us all." This presumes intent before the user has formed any criminal plan. If you have seen the movie Minority Report, you will understand how this could work out. In relation to this, and to the use of AI to discover potential offenders online, Levy and Robinson (see following section) write:

Without exculpatory information, for example from content of the conversation, there is no way for either the platforms or law enforcement to discriminate those accounts they should investigate and those that they should not. This is likely to lead to a significant number of people being 'accused' of doing terrible things by a machine learning algorithm, with effects ranging from removal of their account through to law enforcement intervention. We believe that if implemented as suggested, AI and ML running on metadata alone will be unlikely to assist law enforcement in stopping offenders and safeguarding victims, but will likely lead to a chilling effect on free speech, and infringement on user privacy.

The bold text was in the original. They add, "AI analysis using metadata available on the server will not (on its own) provide accurate detection of most of the harm archetypes we have described in most probable scenarios."
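The difference the Slate authors describe, between a targeted search of one person and a keyword warrant that sweeps across everyone, can be sketched in a few lines of Python. This is only an illustration: the search log, account names and queries are hypothetical, and a real provider's systems are vastly larger. The point is simply what each kind of request returns.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchRecord:
    account: str
    query: str


# Hypothetical search log held by a provider (illustration only).
LOG = [
    SearchRecord("alice", "weather bangkok"),
    SearchRecord("bob", "how to remove a stain"),
    SearchRecord("carol", "stain remover"),
    SearchRecord("suspect", "how to remove a stain"),
]


def targeted_search(log, account):
    """Conventional warrant: the search history of one named person."""
    return [r.query for r in log if r.account == account]


def keyword_search(log, keyword):
    """Keyword warrant: every account whose searches contain the term."""
    return sorted({r.account for r in log if keyword in r.query})


print(targeted_search(LOG, "suspect"))  # ['how to remove a stain']
print(keyword_search(LOG, "stain"))     # ['bob', 'carol', 'suspect']
```

The targeted request returns one person's history; the keyword request returns everyone who typed the term, including people with no connection to any crime.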

Cowardly Apple

I was slightly annoyed a few days ago by what initially seemed to be somewhat arrogant comments by Professor Hany Farid (Alex Hearn, Guardian), who said that Apple ought to bring its CSAM image scanning into operation. Many privacy advocates had warned that the hash system used to identify images on device might be used to identify other targets, such as people whom certain states deemed criminals, with Apple then being forced to search for and identify any related images of those people. Several states have kept lists of "undesirables" or enemies of the state in the past, including the former Soviet Union, China, Nazi Germany and others.

There is now increasing pressure, particularly in the United States, Europe (EU), the UK (Britain) and elsewhere, to pass legislation to combat child abuse. Some of the planned laws are controversial, and most countries already have wide-ranging laws that cover such crimes. With the legislation imminent, Professor Farid, who developed the image hashing technique (PhotoDNA) with Microsoft in 2009, says that Apple should revive its plans for CSAM scanning. The technique is already used by Microsoft, Google, Facebook and others, but not by Apple.

I began writing a lengthy comment on what had seemed a patronizing dismissal of the fears of many privacy activists. Professor Farid's remarks followed recent comments (also reported by Alex Hearn in the Guardian), which could not have been coincidental, from Ian Levy, the NCSC's technical director, and Crispin Robinson, the technical director of cryptanalysis (codebreaking) at GCHQ, on client-side scanning, which is what Apple had put forward last year. Professor Farid wants all online services to do this. I think he is wrong in his view that the criticism Apple received came "from a relatively small number of privacy groups."
He added that while many would have gone along with the proposal from Apple, "a relatively small but vocal group put a huge amount of pressure on Apple and I think Apple, somewhat cowardly, succumbed to that pressure". Many disagree.

Photo-editing histogram

Imagine an electronic camera as laying a fine grid over an image and then recording the level of gray it sees in each cell. If we set the value of black to be 0 and the value of white to be 255, then any gray is somewhere between the two. Conveniently, a string of 8 bits has 256 possible combinations of 1s and 0s, starting with 00000000 and ending with 11111111. With such fine gradation and a fine grid, you can reconstruct the picture convincingly for the human eye. I use this in teaching, but with my second-language students I help with an introduction and some suitable images. It takes a while, but sooner or later a couple of groups will assign values to the white and black squares and the idea falls into place. The PhotoDNA grid squares are assigned values of a far more complex and different nature, which are then combined to give a value for the whole image.
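The grid-and-gray-level idea can be sketched in Python. This is a minimal sketch, assuming brightness is measured from 0.0 (black) to 1.0 (white); real cameras also apply gamma curves and other sensor processing, which are ignored here. The function names are hypothetical.

```python
def to_8bit(intensity: float) -> int:
    """Map a brightness in [0.0, 1.0] (0 = black, 1 = white) to a level 0..255."""
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be between 0.0 and 1.0")
    # Nearest level; Python's round() sends exact halves to the even integer.
    return round(intensity * 255)


def quantize_grid(grid):
    """Apply to_8bit to every cell of a 2-D grid (a list of rows)."""
    return [[to_8bit(cell) for cell in row] for row in grid]


# 8 bits give 2**8 = 256 distinct patterns, 00000000 (0) to 11111111 (255).
print(2 ** 8)                                  # 256
print(format(0, "08b"), format(255, "08b"))    # 00000000 11111111

# A hypothetical 2x3 patch of an image, brightness from 0.0 to 1.0.
patch = [[0.0, 0.5, 1.0],
         [0.25, 0.75, 1.0]]
print(quantize_grid(patch))  # [[0, 128, 255], [64, 191, 255]]
```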

Cat and wine; Cat enlarged showing pixels (right)
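How grid values can be combined into a single value for a whole image may be easier to see with a much simpler relative of PhotoDNA. PhotoDNA itself is proprietary and far more elaborate; the sketch below instead uses "average hash", a well-known basic perceptual hash, and assumes the image has already been shrunk to an 8x8 grid of gray levels (a real implementation would do that resizing first). Each cell contributes one bit: 1 if it is brighter than the grid's average, 0 otherwise.

```python
def average_hash(pixels):
    """Return a 64-bit perceptual hash of an 8x8 grid of 0..255 gray levels.

    Each bit is 1 if that cell is brighter than the grid's mean, else 0.
    """
    if len(pixels) != 8 or any(len(row) != 8 for row in pixels):
        raise ValueError("expected an 8x8 grid")
    cells = [value for row in pixels for value in row]
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


# Hypothetical 8x8 image: left half dark (30), right half light (220).
image = [[30] * 4 + [220] * 4 for _ in range(8)]
# The same image slightly brightened, as an edit or re-save might do.
brighter = [[min(255, v + 10) for v in row] for row in image]

print(f"{average_hash(image):016x}")               # 0f0f0f0f0f0f0f0f
print(average_hash(image) == average_hash(brighter))  # True
```

Because each bit compares a cell with the image's own average, a uniform change in brightness leaves the hash unchanged, which is why such hashes can recognise an image that has been lightly edited.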

Levy and Robinson's paper is over 70 pages long, although the Abstract and Executive Summary help a reader gain a fairly quick insight into the problem. The authors cite seven archetypes of abusers:

Particularly with some of the worst types, offenders go to great lengths to hide their activities, and this is where I begin to question the motivation behind the insistence that Apple should embrace on-device CSAM scanning. If users are aware that Apple (like several other services) is scanning for such images, they would avoid putting images on the iPhone (as well as in Photos and iCloud services). Maybe that is what Apple wants.

The Wikipedia entry on PhotoDNA is helpful with regard to sources, although several of those cited are no longer available (one is blocked). It also includes the comment that in 2017 Hany Farid suggested using the hash technique to remove terror-related imagery (e.g. beheading photos and videos), which immediately shows that hashing can be used for images other than CSAM. This was one of the major criticisms when Apple put forward the idea of on-device CSAM detection, and it is harder to dismiss when the developer himself suggests that such an expansion is possible.
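The point about scope can be illustrated with a toy hash matcher. In this minimal sketch, which assumes 64-bit perceptual hashes compared by Hamming distance (the number of differing bits; PhotoDNA's own comparison method may differ), the code never knows what the listed hashes depict: whoever supplies the list decides what gets flagged. The hash values and the 4-bit distance limit are hypothetical.

```python
def hamming(a: int, b: int) -> int:
    """Number of bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def matches(image_hash: int, hash_list, max_distance: int = 4) -> bool:
    """True if image_hash is within max_distance bits of any listed hash.

    Nothing here depends on what the listed images show; the same
    function works for any list it is handed.
    """
    return any(hamming(image_hash, h) <= max_distance for h in hash_list)


# Hypothetical hash values, for illustration only.
photo = 0x0F0F0F0F0F0F0F0F
list_a = {0x0F0F0F0F0F0F0F0E}   # differs from photo by 1 bit
list_b = {0xF0F0F0F0F0F0F0F0}   # differs from photo in all 64 bits

print(matches(photo, list_a))  # True
print(matches(photo, list_b))  # False
```

Swapping `list_a` for a list of other images (political, religious or anything else) requires no change to the matching code at all.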

I am left with the nagging question of why there is this name-calling in the push to have Apple activate on-device identification of images now, when so many (rather than a small minority) warn about the potential risk to privacy, along with the additional risk, as Professor Farid must be aware, that the technology could be misused.

Graham K. Rogers teaches at the Faculty of Engineering, Mahidol University in Thailand. He wrote for the Bangkok Post's Database supplement on IT subjects, and for the last seven years of Database he wrote a column on Apple and Macs. After 3 years writing a column in the Life supplement, he is no longer associated with the Bangkok Post. He can be followed on Twitter (@extensions_th).

With the move to online working in the last couple of years, Zoom seems to have taken the "Best of" awards, but others like Cisco Webex and Microsoft Teams have also provided many people with workable online setups. The choice usually depended on the organization, although some offered users more than one option. When Apple released its Apple silicon-based computers, software solutions (notably the Rosetta 2 translation layer) ensured that applications would continue to work even though they had been developed for Intel hardware.

In the FAQ provided, the answers to several questions are the same: "PayPal is using the NDID platform as a sole means of verifying the identity of customers seeking to use PayPal Thailand personal accounts (including existing account holders whose accounts will be transferred to PayPal Thailand). A Thai national ID is currently required to enroll in NDID." That is in accordance with the information on the page provided by the

There may also be accidental image acquisition. Alex Jones made this claim after a defamation trial that concluded this week: as well as evidence that he lied about communications, there are reported to be child abuse images on his phone. He claims they were sent to him and that he never looked at them. Apple's proposed system had a threshold number of matches before any action would be taken (Apple indicated an initial threshold of 30), so a person with a couple of such images might not be affected.
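A threshold rule of the kind described above can be sketched as a simple count. This is a simplified illustration under stated assumptions: the hash values and thresholds are hypothetical, exact matching stands in for perceptual matching, and the cryptography in Apple's published design (NeuralHash, private set intersection and threshold secret sharing) is left out entirely.

```python
def should_flag(library_hashes, known_hashes, threshold: int) -> bool:
    """Flag an account only if at least `threshold` of its images match.

    Simplified sketch: counts exact matches and ignores the cryptographic
    protocol a real system would wrap around this rule.
    """
    match_count = sum(1 for h in library_hashes if h in known_hashes)
    return match_count >= threshold


known = {101, 202, 303}               # hypothetical list of known hashes
library = [101, 202, 555, 777, 888]   # hypothetical photo library: 2 matches

print(should_flag(library, known, threshold=10))  # False
print(should_flag(library, known, threshold=2))   # True
```

With a threshold well above a couple of images, an account holding only a few matching files would not be flagged, which is the situation the paragraph describes.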
